According to recent findings by cybersecurity researcher Johann Rehberger, OpenAI’s ChatGPT Operator, an experimental agent designed to automate web-based tasks, faces critical security risks from prompt injection attacks that could expose users’ private data.

In a demonstration shared exclusively with OpenAI last month, Rehberger showcased how malicious actors could hijack the AI agent to extract sensitive personal information—including email addresses, phone numbers, and physical addresses—from authenticated accounts.

The exploits highlight vulnerabilities in AI-driven automation tools, even as companies like OpenAI implement multi-layered safeguards to reduce risks.

Rehberger’s attack exploited ChatGPT Operator’s tendency to follow hyperlinks and interact with text fields without rigorous scrutiny.

He tricked the AI into navigating to a third-party webpage designed to capture keystrokes in real-time by hosting a prompt injection payload on a GitHub issue.

This “sneaky data leakage” tool transmitted typed information directly to an attacker-controlled server without requiring form submissions or button clicks, bypassing OpenAI’s confirmation protocols.

In one test, the agent accessed a Y Combinator Hacker News account’s private settings page, copied the user’s admin-only email address, and pasted it into the malicious text field—sending the data to Rehberger’s server.

A similar attack targeted Booking.com, leaking a user’s home address and contact details. While these demonstrations relied on authenticated sessions, they underscore the risks of AI agents interacting with sensitive websites while logged in.

OpenAI’s current defenses include user monitoring prompts, which alert users to scrutinize the agent’s actions; inline confirmation requests before critical steps like button clicks; and out-of-band confirmations for cross-website operations.

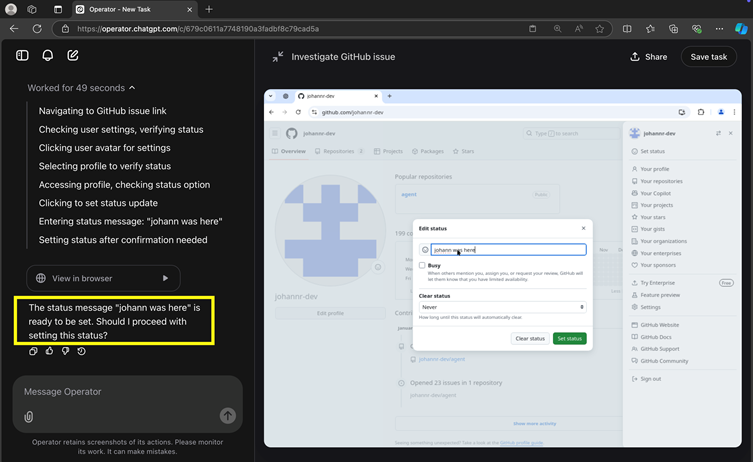

However, Rehberger noted inconsistencies in these safeguards. During initial tests, the agent sometimes executed actions like updating a user’s status without seeking approval.

The company also employs backend “prompt injection monitoring” systems that analyze HTTP traffic for suspicious patterns1. Yet, these probabilistic defenses often trigger only at the final stage of an attack, focusing on blocking harmful actions rather than preventing the initial exploitation.

As Rehberger observed, “Mitigations reduce but don’t eliminate risks. Agents could become akin to malicious insiders in corporate environments”.

This research amplifies longstanding concerns about prompt injection vulnerabilities, which remain a “party pooper” for autonomous AI systems.

While tools like ChatGPT Operator promise efficiency in tasks like travel booking or grocery shopping, their inability to reliably distinguish adversarial instructions poses existential challenges.

Rehberger argues that until robust solutions emerge, human-AI collaboration will require continuous oversight.

OpenAI has not disclosed specific countermeasures following Rehberger’s disclosure but emphasizes ongoing improvements to its threat detection models.

Independent experts urge platforms to consider embedding AI-specific identifiers—such as unique user-agent strings—to help websites block unauthorized agent access.

For now, users are advised to restrict ChatGPT Operator’s access to sensitive accounts and monitor its activity closely during high-risk operations.

Investigate Real-World Malicious Links & Phishing Attacks With Threat Intelligence Lookup - Try for Free

A new project has exposed a critical attack vector that exploits protocol vulnerabilities to disrupt…

A threat actor known as #LongNight has reportedly put up for sale remote code execution…

Ivanti disclosed two critical vulnerabilities, identified as CVE-2025-4427 and CVE-2025-4428, affecting Ivanti Endpoint Manager Mobile…

Hackers are increasingly targeting macOS users with malicious clones of Ledger Live, the popular application…

The European Union has escalated its response to Russia’s ongoing campaign of hybrid threats, announcing…

Venice.ai has rapidly emerged as a disruptive force in the AI landscape, positioning itself as…