In a significant move to bolster the security of generative AI systems, Microsoft has announced the release of an open automation framework named PyRIT (Python Risk Identification Toolkit).

This innovative toolkit enables security professionals and machine learning engineers to proactively identify and mitigate risks in generative AI systems.

Collaborative Effort in AI Security

Microsoft emphasizes the importance of collaborative efforts in security practices and the responsibilities associated with generative AI. The company is dedicated to providing tools and resources that support organizations worldwide in responsibly innovating with the latest AI technologies.

PyRIT, along with Microsoft’s ongoing investments in AI red teaming since 2019, underscores the company’s commitment to democratizing AI security for customers, partners, and the broader community.

The Evolution of AI Red Teaming

AI red teaming is a complex, multistep process that requires an interdisciplinary approach. Microsoft’s AI Red Team consists of experts in security, adversarial machine learning, and responsible AI, drawing on resources from across the Microsoft ecosystem.

This includes contributions from the Fairness Center in Microsoft Research, AETHER (AI Ethics and Effects in Engineering and Research), and the Office of Responsible AI.

Over the past year, Microsoft has proactively red-teamed several high-value generative AI systems and models before their release to customers.

This experience has revealed that red teaming generative AI systems distinctly differ from traditional software or classical AI systems. It involves probing security and responsible AI risks simultaneously, dealing with the probabilistic nature of generative AI, and navigating the varied architectures of these systems.

Go deep dive into malware files, networks, modules, and registry activity and more.

More than 300,000 analysts use ANY.RUN is a malware analysis sandbox worldwide. Join the community to conduct in-depth investigations into the top threats and collect detailed reports on their behavior..

Introducing PyRIT

PyRIT was initially developed as a set of scripts used by the Microsoft AI Red Team as they began red teaming generative AI systems in 2022. The toolkit has evolved to include features that address various risks identified during these exercises.

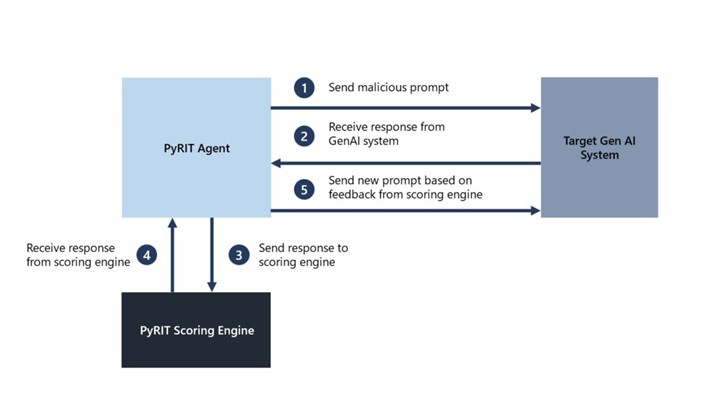

PyRIT is now a reliable tool that increases the efficiency of red teaming operations, allowing for the rapid generation and evaluation of malicious prompts and responses.

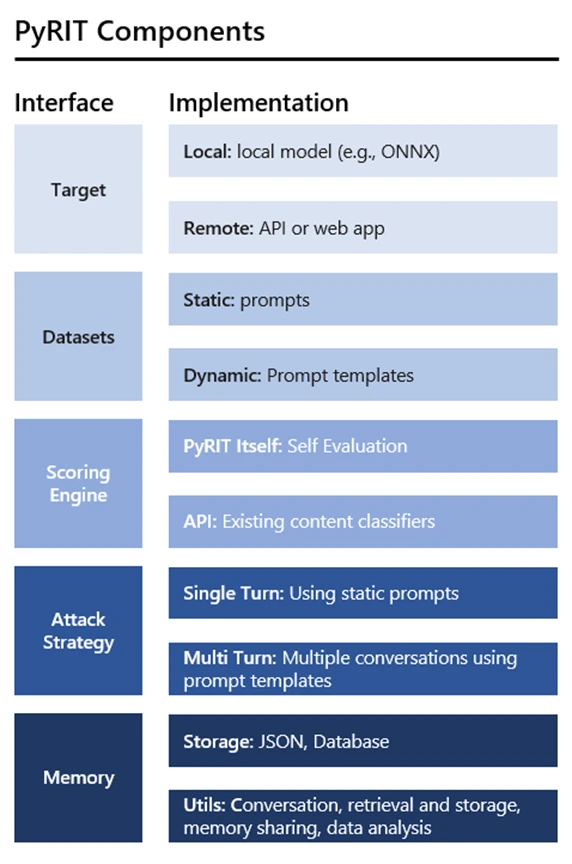

The toolkit is designed with abstraction and extensibility in mind, supporting a variety of generative AI target formulations and modalities. PyRIT integrates with models from Microsoft Azure OpenAI Service, Hugging Face, and Azure Machine Learning Managed Online Endpoint.

It also includes a scoring engine that can use classical machine learning classifiers or leverage an LLM endpoint for self-evaluation. It also supports single and multi-turn attack strategies.

Moving Forward with PyRIT Components

Microsoft encourages industry peers to explore PyRIT and consider how it can be adapted for red teaming their own generative AI applications. To facilitate this, Microsoft has provided demos and is hosting a webinar in partnership with the Cloud Security Alliance to demonstrate PyRIT’s capabilities.

| PyRIT may be used as a web service or incorporated in apps to formulate generative AI targets. Text inputs are originally supported, but more modalities can be added. Microsoft Azure OpenAI Service, Hugging Face, and Azure Machine Learning Managed Online Endpoint models work smoothly with the toolkit. This integration makes PyRIT a versatile AI red team bot that can interact in single and multi-turn scenarios. |

| The datasets component of PyRIT lets security experts choose a static collection of malicious questions or a dynamic prompt template to test the system. These templates enable encoding many damage categories, including security and responsible AI failures, and automated harm investigation across all categories. PyRIT’s initial version contains prompts with popular jailbreaks to assist people get started. |

| PyRIT’s scoring engine evaluates target AI system outputs using a standard machine learning classifier or an LLM endpoint for self-evaluation. Additionally, Azure AI Content filters may be used via API. |

| Two attack techniques are supported by the toolkit. Sending jailbreak and harmful suggestions to the AI system and rating its reaction is the single-turn strategy. The multi-turn approach responds to the AI system depending on the starting score, creating more intricate and realistic adversarial behavior. |

| To analyze intermediate input and output interactions later, PyRIT stores them in memory. This feature allows for more multi-turn talks and the sharing of explored topics. |

| Microsoft invites industry colleagues to use PyRIT to red team generative AI solutions. Microsoft and Cloud Security Alliance are holding a webinar to highlight PyRIT’s capabilities. Microsoft’s plan to map, measure, and manage AI risks promotes a safer, more responsible AI environment. |

This release represents a significant step in Microsoft’s strategy to map, measure, and mitigate AI risks, contributing to a safer and more responsible AI ecosystem.

For more information on Microsoft’s AI Red Team and resources for securing AI, interested parties can watch Microsoft Secure online and learn about product innovations that enable the safe, responsible, and secure use of AI.